Reproducibility of science and its role in scholarly peer review

Peer Review Week 2016, Article #3

Recent literature on the subject increasingly suggests that “reproducibility” should be one of the components, perhaps a key one, of peer review. In addition to assessing, based on their own judgement, the scientific relevance and validity of the “published” research results, a reviewer should also attempt to “reproduce” the results presented in the “publication”, or at least verify that the original researchers have made available all the elements (e.g. protocol, data, software) needed to reproduce them.

Results that cannot be reproduced by independent analysis can hardly be considered relevant advances in a field of study. Nonetheless, many studies now show that a considerable number of research findings published in well-known peer-reviewed scientific journals could not be reproduced. As a reference, consider one of the latest studies [1].

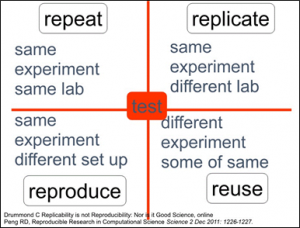

But what is meant by “reproducibility”? And how does its meaning change across research domains? For the first question, a starting point may be the diagram below, which defines four levels of “reproducibility” (thanks to Carole Goble for her chart [2]).

We move from repeat (clearly not part of peer review and, in some cases reported in the literature, impossible even for the team that conducted the original research) to replicate (successful in a small percentage of attempted cases, according to the literature) to reproduce (successful in a slightly higher percentage of attempted cases) to reuse (which pushes the research towards producing new scientific knowledge, the ultimate goal of scientific research). The amount and format of the information that must be made available to other scientists clearly depends on which of these actions is attempted and on the specific field of study.

The second issue, rarely mentioned in the literature, is that the possibility of performing any of the above actions depends heavily on the field of research. Without entering into the long-standing debate about hard science and soft science (see for example the entry “Hard and soft science” in Wikipedia), it is clear that there are two extremes. At one extreme we have a research flow (data and process) that is objectively defined, allowing perfect replicability and enabling reproducibility. At the other extreme we have a research flow in which the data are the researcher’s personal observations (e.g. of some ancient artefact) and the process is the reasoning by which the researcher has drawn conclusions from those data. In the latter case we might have at most a reuse, assuming that subsequent researchers agree with the original conclusions.

To conclude, as a prerequisite for including reproducibility in the peer review process, it would be helpful to have some kind of guidance (a classification, or perhaps a taxonomy) so that the reviewer can understand which “level of reproducibility” is possible and whether the published information (results, data, process, etc.) is enough to attempt a replication.

References:

[1] http://www.nature.com/news/1-500-scientists-lift-the-lid-on-reproducibility-1.19970

[2] http://www.slideshare.net/carolegoble/ismb2013-keynotecleangoble