Wisdom of the crowd for scientific review

Public opinion as a complement to the peer review system

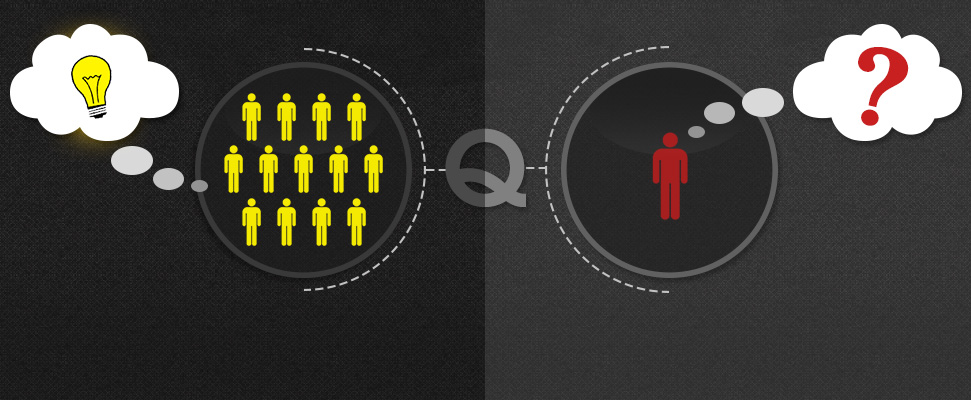

In the era of consumerism and social media, reputation and public opinion are key factors in purchase decisions. Almost everyone relies on reviews and comments when choosing a restaurant, a car or a place to live. Companies like Google have adopted public reviews as an important resource to share with customers, in addition to professional reviews. This powerful tool for decision making is related to the concept of the "Wisdom of the crowd": the principle that the average opinion or solution from a large group of individuals usually performs better than most of the solutions provided by single individuals or a few experts.
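The principle can be illustrated with a small numerical sketch. The guesses below are made-up numbers chosen for illustration, not data from any study, and `crowd_vs_individuals` is a hypothetical helper name.

```python
from statistics import mean


def crowd_vs_individuals(estimates, truth):
    """Compare the error of the crowd average with each individual's error."""
    crowd_error = abs(mean(estimates) - truth)
    individual_errors = [abs(e - truth) for e in estimates]
    # Share of individuals whose own estimate is worse than the crowd average.
    beaten = sum(err > crowd_error for err in individual_errors)
    return crowd_error, beaten / len(estimates)


# Hypothetical guesses for a quantity whose true value is 100.
guesses = [80, 95, 130, 112, 70, 120, 105, 90]
crowd_error, share_beaten = crowd_vs_individuals(guesses, truth=100)
print(f"crowd error: {crowd_error}, share of individuals beaten: {share_beaten}")
```

In this toy case the average misses the true value by 0.25, while every single guess misses it by at least 5. The effect relies on individual errors pointing in different directions; a crowd that shares the same bias will not average it away.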

However, scientific journals rely only on the opinion of "experts" to evaluate the quality of manuscripts and decide whether to publish them, after numerous revisions, or reject them. Though fundamental and useful, it is well known in the scientific community that the peer review system is very inefficient and sometimes a victim of pure randomness:

- Cases of randomly generated papers reviewed and published by scientific journals have become public in recent years: link and link.

- Papers are usually reviewed by 2-3 scientists, and the probability that personal beliefs and subconscious biases heavily influence the final decision is very high.

- Nowadays, papers tend to include authors from different fields like medicine, statistics, computer science, biochemistry, genetics, etc. Having a few reviewers reduces the probability of a detailed evaluation of all the analyses and figures in the manuscript, since reviewers tend to be experts in a particular topic. By increasing the number of evaluators it is more likely that all aspects of the manuscript will be reviewed correctly by several experts.

- The average review time is around 4-5 months, but these estimates ignore the fact that authors usually need to submit their work to multiple journals before final acceptance. Overall, scientific papers tend to take around 1 year to be published, and in science such delays are unacceptable since most fields advance very quickly. It is quite common that, by the time authors publish a manuscript from a particular project, they have already been working for weeks or months on a second project. This problem is especially critical for PhD students, who must publish a certain number of papers in 3-4 years before they defend their thesis.

- Referees are usually overworked and it’s a common practice for them to give the manuscripts to students or postdocs to perform the review itself (breaching, among other things, the confidentiality of the process).

- Journals also reject papers for reasons not directly related to the manuscript, such as a lack of reviewers.

Given these problems, it is critical to improve the review system to boost its efficiency and speed.

In the current scientific publication system, number of citations and impact factor are two of the key elements that authors take into account before reading a scientific paper among the many that are published every day. However, these metrics have their limitations and are prone to misinterpretation. Furthermore, estimates indicate that only a minority of published papers have been cited, but that a significantly higher percentage of publications have actually been read by someone. Another fundamental problem of this system is that an author simply does not cite a paper that he/she did not like after reading it, and his/her valuable opinion is lost.

Here is where public opinion and the “Wisdom of the crowd” could help. Keeping track of each reader’s judgement of a paper is a powerful measure of its quality and significance. It would be crazy to think that every single product on eBay should be reviewed by an “expert” before being made available to the public. On the contrary, public review after the products have been made available is a faster and more efficient way to select high-quality items, and after some time, if the quality of a product is poor, it is removed from the market. This system could be implemented in the scientific community (like in bioRxiv), so that authors could rate and review all the papers they read, for example through a 5-star system similar to the one found on Amazon.
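As a rough sketch of what such a 5-star record might look like for a single paper, the snippet below summarizes a list of reader ratings. The function name `summarize_ratings` and the ratings themselves are hypothetical, and this is not how bioRxiv or Amazon implement their systems.

```python
from collections import Counter
from statistics import mean


def summarize_ratings(stars):
    """Return the number of ratings, mean score and per-star counts for one paper."""
    if any(s not in (1, 2, 3, 4, 5) for s in stars):
        raise ValueError("each rating must be an integer from 1 to 5")
    counts = Counter(stars)
    return {
        "n": len(stars),
        "mean": round(mean(stars), 2) if stars else None,
        "histogram": {star: counts.get(star, 0) for star in range(1, 6)},
    }


# Hypothetical ratings left by seven readers of one preprint.
summary = summarize_ratings([5, 4, 4, 3, 5, 2, 4])
print(summary)
```

Reporting the number of ratings and their distribution next to the mean helps readers tell a paper rated 5 stars once from a paper rated highly by many people.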

This way, journals could adapt their publication methods to, for example, perform only editorial modifications and a minimal quality check when they receive a paper, and allow its public evaluation by a panel of experts (like certified reviewers) and general readers once it’s published. This concept is commonly known as “post-publication review”. Some advantages over the current pre-publication review would be:

- The time required to publish a manuscript would be greatly reduced, since authors would publish directly in the journal of their choice (after some necessary checks).

- The consequences of randomness and biases imposed by a few “expert” reviews would be diluted in the average opinion of a larger group of reviewers.

- Faster accessibility by the community to papers.

- Cost reduction for journals.

- More comprehensive reviews due to the huge number of evaluators to assess the quality of publications.

Every system has its own limitations, and post-publication review is no exception. For example, some details of this system would need to be discussed, like whether to allow anonymous opinions or whether to stratify readers and their ratings by experience or field. Additionally, scientists might tend to read and evaluate manuscripts from famous authors more often than those from lesser-known ones. However, this already happens with the current system, where readers and reviewers tend to be influenced by the names and affiliations of the authors. It’s just a matter of evolution: we cannot keep using a system that is hundreds of years old. Similar approaches have actually been tested, like crowd reviews.
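The idea of stratifying ratings by field mentioned above could be sketched as follows. This is only an illustration under assumed inputs: the `(field, stars)` pairs are invented, and `stratified_means` is a hypothetical helper rather than part of any existing review platform.

```python
from collections import defaultdict
from statistics import mean


def stratified_means(ratings):
    """Group (stratum, stars) pairs and return the mean rating per stratum."""
    groups = defaultdict(list)
    for stratum, stars in ratings:
        groups[stratum].append(stars)
    return {stratum: mean(values) for stratum, values in groups.items()}


# Hypothetical ratings for one paper, tagged with each reader's field.
ratings = [
    ("statistics", 2), ("statistics", 3),
    ("genetics", 5), ("genetics", 4), ("genetics", 5),
    ("computer science", 4),
]
by_field = stratified_means(ratings)
print(by_field)
```

In this invented example the pooled mean would be about 3.8 stars, which hides the fact that the statisticians rated the paper noticeably lower than the geneticists. The same grouping could be applied to experience level instead of field.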

The overall conclusion is that scientific review should be a more open process and should involve the whole community, not only a hand-picked set of “experts”. An example of open science having a significant impact is Foldit, where literally anyone can help in the fight against diseases like HIV.

Victor Venema (@VariabilityBlog)

The more I think about it, the more I like post-publication peer review. I would like to restrict the quantitative assessment to people with actual expertise. But if others would like to add comments, that could still be helpful.

It would also be important that every article is reviewed. Current post-publication systems either focus on bad articles or only collect and recommend the best ones.

What do you think of the post-publication system I am setting up?

https://homogenisation.wordpress.com/
